Much of the historical and philosophical analysis (e.g., Engelhard, Fisher) from the Rasch camp has followed the notion that Rasch’s principles and methods flow naturally and logically from the best measurement thinking (Thurstone, Binet, Guttman, Terman, et al.) of the early 20th century and beyond. From this very respectable and defensible perspective, Rasch’s contribution was a profound but normal progression based on this earlier work, and it provided the tools to deal with the awkward measurement problems of the time, e.g., validity, reliability, equating. Before Rasch, the consensus was that the only forms that could be equated were those that didn’t need equating.

When I reread Thomas Kuhn’s “The Structure of Scientific Revolutions,” I was led to the conclusion that Rasch’s contribution rises to the level of a revolution, not just a refinement of earlier thinking or an elaboration of previous work. It is truly a paradigm shift, although Kuhn didn’t particularly like the phrase (and it probably doesn’t appear in my 1969 edition of “Structure”). I don’t particularly like it either, because it doesn’t adequately differentiate between “new paradigm” and “tweaked paradigm”; in more of Kuhn’s words, a new world, not just a new view of an old world.

To qualify as a Kuhnian Revolution requires several things. There must be a new paradigm, of course, and it must satisfactorily resolve the anomalies that accumulated under the old paradigm and were sufficient to provoke a crisis in the field. It must be appealing enough to attract a community of adherents. To attract adherents, it must solve enough of the existing puzzles to be satisfying, and it must present some new ones to send the adherents in a new direction and give them something new to work on.

One of Kuhn’s important contributions was his description of “Normal Science,” which is what most scientists do most of the time. It can be the process of eliminating inconsistencies, either by tinkering with the theory or by disqualifying observations. It can be clarifying details or bringing more precision to the experimentation. It can be articulating implications of the theory, i.e., if that is, then this must be. We get more experiments to do and other hypotheses to prove.

Kuhn described this process as “Puzzle Solving,” with, I believe, no intent of being dismissive. The puzzles fall into the rough categories of tweaking the theory, designing better experiments, or building better instruments.

The term “paradigm” wasn’t coined by Kuhn but he certainly brought it to the fore. There has been a lot of discussion and criticism since of the varied and often casual ways he used the word but it seems to mean the accepted framework within which the community who accept the framework perform normal science. I don’t think that is as circular as it seems.

The paradigm defines the community and the community works on the puzzles that are “normal science” under the paradigm. The paradigm can be ‘local,’ existing as an example or perhaps even an exemplar of the framework. Or it can be ‘global.’ Then it is the view that defines a community of researchers and the world view that holds that community together. This requires that it be attractive enough to divert adherents from competing paradigms and that it be open-ended enough to give them issues to work on or puzzles to solve.

If it’s not attractive, it won’t have adherents. The attraction has to be more than just being able to “explain” the data more precisely. That would just be normal science with a better ruler. To truly be a new paradigm, it needs to involve a new view of the old problems. One might say, and some have, that after, say, Aristotle and Copernicus and Galileo and Newton and Einstein and Bohr and Darwin and Freud, etc., etc., we were in a new world.

Your paradigm won’t sell or attract adherents if it doesn’t give them things to research and publish. The requirement that the paradigm be open-ended is more than marketing. If it’s not open-ended, then it has all the answers, which makes it dogma or religion, not science.

Everything is fine until it isn’t. Eventually, an anomaly will present itself that can’t be explained away by tweaking the theory, censoring the data, or building a better microscope. Or perhaps the anomalies and the tweaks required to fit them in become so cumbersome that the whole thing collapses under its own weight. When the anomalies become too obvious to dismiss, too significant to ignore, or too cumbersome to stand, the existing paradigm cracks, ‘normal science’ doesn’t help, and we are in a ‘crisis’.

Crisis

The psychometric new world may have turned with Lord’s seminal 1950 thesis. (Like most of us at a similar stage, Lord had a crisis of his own: he needed a topic that would get him admitted into the community of scholars.) When he looked at a plot of item percent correct against total number correct (the item’s characteristic curve), he saw a normal ogive. That fit his plotted data pretty well, except in the tails. So he tweaked the lower end to “explain” too many right answers from low scorers. The mathematics of the normal ogive are, at least, cumbersome and, in 1950, were computationally intractable. So that was pretty much that, for a while.

In the 1960s, the normal ogive morphed into the logistic. Perhaps the idea came from following Rasch’s (1960) lead, perhaps from somewhere else, perhaps from Birnbaum (1968); I’m not a historian and this isn’t a history lesson. The mathematics were a lot easier and computers were catching up. The logistic was winning out, but with occasional retreats to the normal ogive because it fit a little better in the tails.
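To see how little is given up in moving from the normal ogive to the logistic, here is a minimal Python sketch that compares the two curves over a grid. The scaling constant 1.702 is the conventional choice for matching the curves and is an assumption brought in for illustration; it does not come from the text above, and the grid limits are arbitrary.

```python
import math

# Conventional scaling constant used to line the logistic up with the
# normal ogive (an assumption for this illustration).
D = 1.702

def normal_ogive(z):
    """Cumulative standard normal distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def logistic(z):
    """Standard logistic function."""
    return 1.0 / (1.0 + math.exp(-z))

# Evaluate both curves from -6 to +6 in steps of 0.01 (arbitrary grid).
grid = [i / 100.0 for i in range(-600, 601)]
max_gap = max(abs(normal_ogive(z) - logistic(D * z)) for z in grid)

print(f"largest gap between the two curves: {max_gap:.4f}")
```

On the probability scale the two curves stay within about one percentage point of each other everywhere on this grid, which is why the choice between them is mostly a matter of mathematical convenience.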

US psychometricians saw the problem as data fitting and it wasn’t easy. There were often too many parameters to estimate without some clever footwork. But we’re clever and most of those computational obstacles have been overcome to the satisfaction of most. The nagging questions remaining are more epistemological than computational.

Can we know if our item discrimination estimates are truly indicators of item "quality" and not loadings on some unknown, extraneous factor(s)?

If the lower asymptote is what happens at minus infinity where we have no data and never want to have any, why do we even care?

If the lower asymptote is the probability of a correct response from an examinee with infinitely low ability, how can it be anything but 1/k, where k is the number of response choices?

How can the lower asymptote ever be higher than 1/k? (See Slumdog Millionaire, 2008)

If the lower asymptote is population-dependent, isn't the ability estimate dependent on the population we choose to assign the person to? Mightn't individuals vary in their propensity to respond to items they don't know?

Wouldn't any population-dependent estimate be wrong on the level of the individual?

If you ask the data for “information” beyond the sufficient statistics, not only are your estimates population-dependent, they are also subject to whatever extraneous factors might separate high scores from low scores in that population. This means sacrificing validity in the name of reliability.
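For readers who want the discrimination and lower-asymptote questions in concrete form, here is a minimal Python sketch of the model usually written as the three-parameter logistic. The parameter names (`a` for discrimination, `b` for difficulty, `c` for the lower asymptote) and all the values are illustrative assumptions, not estimates from any data discussed here.

```python
import math

def p_correct(theta, a, b, c):
    """Three-parameter logistic: c + (1 - c) * logistic(a * (theta - b))."""
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))

k = 4            # hypothetical number of response choices
c = 1.0 / k      # lower asymptote set to the blind-guessing rate

# As ability drops, the probability of a correct response approaches c
# and never goes below it; the item parameters a and b are made up.
for theta in (-10.0, -3.0, 0.0, 3.0):
    print(theta, round(p_correct(theta, a=1.0, b=0.0, c=c), 4))
```

Nothing in the formula ties `c` to 1/k; it is a free parameter fitted to whatever the low scorers in the calibration sample happened to do, which is exactly what the questions above are poking at.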

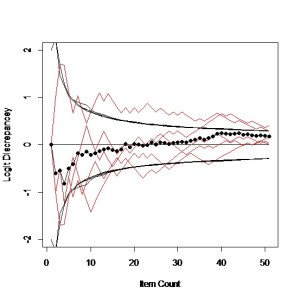

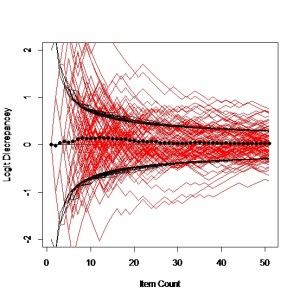

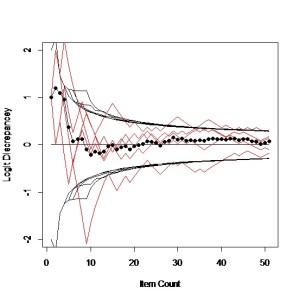

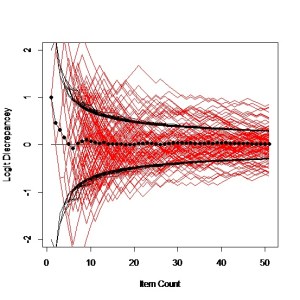

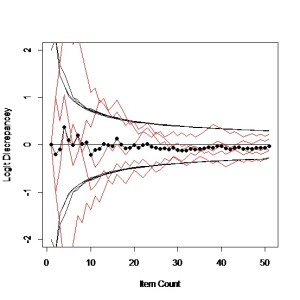

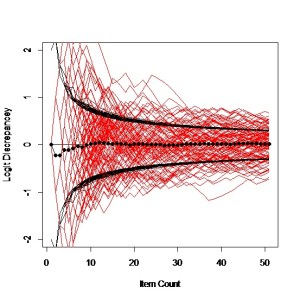

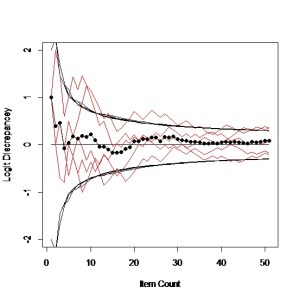

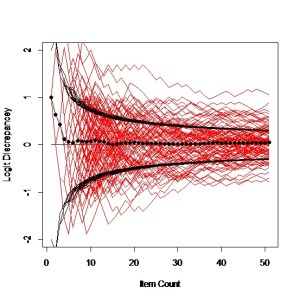

Rasch did not see his problem as data fitting. As an educator, he saw it directly: more able students do better on the set tasks than less able students. As an associate of Ronald Fisher (either the foremost statistician of the twentieth century who also made contributions to genetics or the foremost geneticist of the twentieth century who also made contributions to statistics), Rasch knew about logistic growth models and sufficient statistics. Anything left in the data, after reaping the information with the sufficient statistics, should be noise and should be used to control the model. The size of the residuals isn’t as interesting as the structure, or lack thereof.¹
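The role of the sufficient statistic can be shown with a small Python sketch. In the dichotomous Rasch model, once the raw score is known, the probability of any particular response pattern no longer depends on the person's ability. The item difficulties, the response pattern, and the ability values below are hypothetical, chosen only for illustration.

```python
import math
from itertools import product

def p_right(theta, d):
    """Rasch probability of a correct response (ability theta, difficulty d)."""
    return 1.0 / (1.0 + math.exp(-(theta - d)))

def pattern_prob(pattern, theta, difficulties):
    """Probability of a full 0/1 response pattern, assuming local independence."""
    prob = 1.0
    for x, d in zip(pattern, difficulties):
        p = p_right(theta, d)
        prob *= p if x == 1 else 1.0 - p
    return prob

def conditional_on_score(pattern, theta, difficulties):
    """P(pattern | raw score): divide by the total over patterns with the same score."""
    score = sum(pattern)
    same_score = [q for q in product((0, 1), repeat=len(difficulties))
                  if sum(q) == score]
    total = sum(pattern_prob(q, theta, difficulties) for q in same_score)
    return pattern_prob(pattern, theta, difficulties) / total

difficulties = [-1.0, 0.0, 1.5]   # hypothetical item difficulties
pattern = (1, 0, 1)               # right, wrong, right: raw score of 2

# The conditional probability comes out the same at every ability level.
for theta in (-2.0, 0.0, 2.0):
    print(theta, round(conditional_on_score(pattern, theta, difficulties), 6))
```

Because ability drops out of the conditional probability, whatever structure remains in the response patterns after conditioning on the score says something about the items and the fit of the model, not about the person's location.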

Rasch Measurement Theory certainly has its community and the members certainly adhere and seem to find enough to do. Initially, Rasch found his results satisfying because they got him around the vexing problem of how to assess the effectiveness of remedial reading instruction when he didn’t have a common set of items or common set of examinees over time. This led him to identify a class of models that define Specific Objectivity.
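One narrow consequence of Specific Objectivity can be sketched in Python: under the Rasch model, the comparison between two persons, expressed as a difference in log-odds, comes out the same no matter which item is used to make it. This is a simplified illustration and not Rasch's full definition. The contrast model with a discrimination parameter, along with every ability and item value, is a hypothetical added only to show what is lost when items are allowed different slopes.

```python
import math

def log_odds(p):
    """Log-odds (logit) of a probability."""
    return math.log(p / (1.0 - p))

def rasch(theta, d):
    """Rasch probability of success for ability theta on difficulty d."""
    return 1.0 / (1.0 + math.exp(-(theta - d)))

def two_param(theta, d, a):
    """Contrast model: logistic with an item discrimination a."""
    return 1.0 / (1.0 + math.exp(-a * (theta - d)))

theta_a, theta_b = 1.0, -0.5                      # two hypothetical persons
items = [(-1.0, 0.6), (0.0, 1.0), (2.0, 1.8)]     # (difficulty, discrimination)

# Person A versus person B, compared separately on each item.
rasch_gaps = [log_odds(rasch(theta_a, d)) - log_odds(rasch(theta_b, d))
              for d, _ in items]
two_param_gaps = [log_odds(two_param(theta_a, d, a)) - log_odds(two_param(theta_b, d, a))
                  for d, a in items]

print("Rasch:", [round(g, 3) for g in rasch_gaps])
print("With discrimination:", [round(g, 3) for g in two_param_gaps])
```

Under the Rasch model every item reports the same gap between the two persons, so it does not matter which items they happened to take. Once discrimination varies, the size of the gap depends on the item chosen, which is the situation Rasch could not afford when he had no common items over time.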

Rasch’s crisis (how to salvage a poorly thought-out experiment) hardly rises to the epic level of Galileo’s crisis with Aristotle, or Copernicus’ crisis with Ptolemy, or Einstein’s crisis with Newton. A larger view would say the crisis came about because the existing paradigms did not lead us to “measurement”, as most of science would define it.

In the words of William Thomson, Lord Kelvin:

“When you can measure what you are speaking about and express it in numbers, you know something about it; but when you cannot measure it, when you cannot express it in numbers, your knowledge is of a meager and unsatisfactory kind.”

Revolution

Rasch’s solution did change the world for any adherents who were willing to accept his principles and follow his methods. They now knew how to ‘equate’ scores from disparate instruments, but beyond that, how to develop scales for measuring, define constructs to be measured, and do better science.

Rasch’s solution to his problem in the 1950s with remedial reading scores is still the exemplar and “local” definition of the paradigm. His generalization of that solution to an entire class of models and his exposition of “specific objectivity” are the “global” definition. (Rasch, 1960)

There’s a problem with all this. I am trying to force fit Rasch’s contribution into Kuhn’s “Structure of Scientific Revolutions” paradigm when Rasch Measurement admittedly isn’t science. It’s mathematics, or statistics, or psychometrics; a tool, certainly a very useful tool, like Analysis of Variance or Large Hadron Colliders.

Measures are necessary precursors to science. Some of the weaknesses in pre-Rasch thinking about measurement are suggested in the following koans, hoping for enlightened measurement, not Zen enlightenment.

"Whatever exists at all exists in some amount. To know it thoroughly involves knowing its quantity as well as its quality." E. L. Thorndike

"Within the range of objects for which the measuring instrument is intended, its function must be independent of the object of measurement." L. L. Thurstone

"You never know a line is crooked unless you have a straight one to put next to it." Socrates

"Correlations are population-dependent, and therefore scientifically rather uninteresting." Georg Rasch

"We can act as though measured differences along the latent trait are distances on a river but whoever is piloting better watch for meanders and submerged rocks."

"We may be trying to measure size; perhaps height and weight would be better. Or perhaps, we are measuring 'weight', when we should go after 'mass'"

"The larger our island of knowledge, the longer our shoreline of wonder." Ralph W. Sockman

"The most exciting thing to hear in science ... is not 'Eureka' but 'That's funny.'" Isaac Asimov

¹ A subtitle for my own dissertation could be “Rasch’s errors are my data.”